How to Choose the Best Model for Marketing Prediction

First in a series on how and why conDati chooses the prediction models we use to improve your marketing performance.

Historically, marketers had to rely on instinct and experience alone to produce winning, and hopefully profitable, campaigns. Months would go by without any solid information, and when the numbers did arrive, their accuracy was always in question.

Not having enough information isn’t really a problem anymore. Today, marketers aren’t just swamped by data; they are paralyzed by it and, at the same time, required to extract meaningful insight from multiple data streams (sometimes more than a dozen) in a timely manner. Given this situation, we took on the challenge of extracting the valuable signals from the noise in these combined data streams, cost-effectively and in real time, in order to break through this paralysis. Here’s how we did that:

Necessary Parameters - Assessing Needs and Wants

When we listened to marketing professionals, they told us they wanted a solution that would be able to:

- alert when things weren’t working as planned
- forecast demand
- reveal underlying trends and seasonality
- show verifiable impact from marketing campaigns

To get to that solution, we worked toward creating a prediction model that would address each of the above needs.

Knowing which needs the solution had to address let us define the model we would eventually provide for our clients. Given those needs, we knew we needed a model whose structural components (for example, trend, seasonality, and holiday effects) would be:

- easy to understand
- robust to outliers and missing data
- performant for long-term forecasts
- extensible to include other factors such as competition and demand signals

Each of these requirements created a unique set of constraints that eventually helped us with our choice.

No Perfect Model

There’s an aphorism in statistics that says: “all models are wrong, but some are useful.” One way to think about this is to realize that a map is a model of the city you are traversing. It’s useful, but it’s an approximation of the city. It’s never going to be as complex or as “real” as the actual city.

When we build models, we do so to help us understand a real-world problem. The models help us solve problems and answer questions and so, at their best, are useful. But, just as the map of the city you are in will never be an exact replica of that city, the art of building a model requires us to find the best approximation of the real-life situation we need to better understand.

Easy to Understand

The fact that our clients required a model that was easy to understand should be self-explanatory. However, this one constraint, perhaps more than the others, deserves further explanation.

Our clients needed a prediction model that was transparent, not a black box. Many commonly used time series models are in fact what are known as “black box” models. We know the input and the output, but we can’t see the “inner workings” of the model. This becomes a problem because, although these models can perform better in terms of prediction accuracy, they do not offer the same level of interpretability.

And interpretability is a key advantage conDati brings to our clients. In other words, we supply results presented to our clients in language that is relevant to their reporting needs. In order to do this, we needed to ditch the black box and provide more transparency in our chosen predictive model.

In this case, NASA (Ritchie Lee, Mykel J. Kochenderfer, Ole J. Mengshoel, and Joshua Silbermann, 2017) said it best: for a model to be useful, “humans must be able to understand and reason about the information captured by the model.” We agree. Some compare the importance of interpretability in machine learning to the idea of reproducibility in scientific research, which is now considered standard but was not always. We feel that it is essential for the marketing experts who use conDati to be able to validate the model’s output, which in turn engenders trust in our product.

Robust to Outliers and Missing Data

The data sets that we would be combining for marketing teams come from sources ranging from Google Analytics to Shopify to Marketo to HubSpot. The model chosen would need to reliably handle input data that contains outliers and is incomplete, as is often the case with our clients’ data streams.

In essence, what we want to do is separate the different streams of data so that we can see how much of an outcome is caused by the basic heterogeneous behavior of individuals and how much by external factors in the environment. I’ve seen markets influenced by product availability, competition, seasonality and the weather. When you then add the further external complexity of multiple digital marketing channels, the challenge of finding a modeling technique that fits all the needs of today’s marketing professional becomes clearer.

Performant Long-Term Forecasting

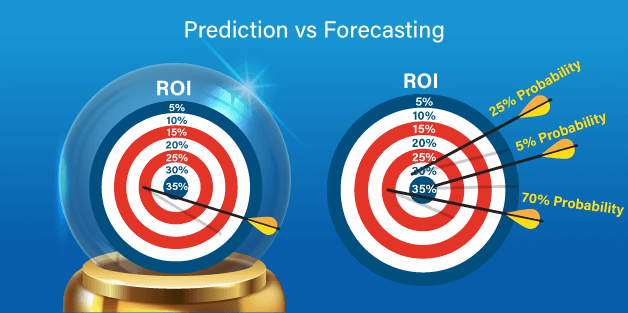

Building time series models that reliably forecast future values (at time t + k, k > 0) based on observations up to time t was a necessary requirement for our models. A subset of prediction, forecasting uses probabilistic methods to make statements about the future based on historic data trends.

To help with understanding these terms, we can distinguish between prediction and forecasting as follows: though it is impossible to predict a purchase (we can’t say for certain that a user will purchase our product on a specific date in a specific place), we can forecast the number of purchases that will probably occur within a future period of time in a geographic (or virtual) location.

At conDati, we built models that take historic marketing data trends blended from our customers’ sources to forecast future scenarios, ultimately providing marketing professionals with a basis to guide decision making.
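The distinction can be made concrete with a small sketch. It assumes, purely for illustration, that purchases arrive as a Poisson process with a constant daily rate; the function name `forecast_purchase_count` and the daily counts are made up, and the interval ignores uncertainty in the estimated rate as well as any trend or seasonality. It is not conDati's model, only an example of forecasting a count over a period rather than predicting an individual purchase.

```python
import math
from statistics import mean


def forecast_purchase_count(daily_counts, horizon_days, z=1.96):
    """Forecast total purchases over the next `horizon_days`.

    Illustrative assumption: purchases follow a Poisson process with a
    constant daily rate (no trend, no seasonality).
    Returns (expected_total, lower_bound, upper_bound).
    """
    if not daily_counts:
        raise ValueError("need at least one day of history")
    rate = mean(daily_counts)           # estimated purchases per day
    expected = rate * horizon_days      # expected total over the horizon
    spread = z * math.sqrt(expected)    # a Poisson variance equals its mean
    return expected, max(0.0, expected - spread), expected + spread


history = [12, 9, 11, 14, 10, 8, 13]    # made-up daily purchase counts
expected, low, high = forecast_purchase_count(history, horizon_days=7)
print(f"expected {expected:.0f} purchases, roughly {low:.0f} to {high:.0f}")
```

The output is a range for the week as a whole; nothing in it says which user will buy, or when.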

Extensible

Last, we needed our model to be extensible. We needed a model that could flex and grow and adapt to the specific reporting needs of our clients. We needed to be able to add metrics, giving a more complete reporting result than has been possible in marketing up to this point.

Harvesting Insight Using Time-Tested Methods

Obviously, there are many time series modeling techniques to choose from when modeling a market. We built, tried, and applied several, including Holt-Winters, LMS, exponential smoothing, ARIMA and neural networks, before we landed on structural time series (STM) models.

In the end, we chose structural time series models, which neatly address our collective wants and needs. Structural time series models are a subset of a larger class of modeling techniques called state space models. These models handle non-normal distributions well and are stochastic (non-deterministic; in other words, they acknowledge that we can’t reliably predict exactly what will happen in the future). They also decompose into components, which gives us the benefit of being able to forecast the different trends that are important to our clients.
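To give a feel for what a state space model looks like, here is a minimal sketch of the simplest structural time series model, the local level model, filtered with a Kalman filter in plain Python. The function names, variances, and data are illustrative assumptions rather than conDati's implementation, and a production model would add further state components (such as seasonality and holiday effects) alongside the level. The sketch skips the update step when an observation is missing, and its forecast variance grows with the horizon, which is the stochastic behavior described above.

```python
def local_level_filter(observations, obs_var, level_var,
                       init_level=0.0, init_var=1e6):
    """Kalman filter for a local level model:
        y_t     = level_t + observation noise (variance obs_var)
        level_t = level_{t-1} + level noise   (variance level_var)
    Entries equal to None are treated as missing observations.
    Returns (filtered_levels, final_level, final_variance).
    """
    level, var = init_level, init_var   # large init_var: vague starting belief
    filtered = []
    for y in observations:
        var += level_var                # predict: the level is a random walk
        if y is not None:               # update only when a value was observed
            gain = var / (var + obs_var)
            level += gain * (y - level)
            var *= 1.0 - gain
        filtered.append(level)
    return filtered, level, var


def forecast(level, var, steps, obs_var, level_var):
    """Return (mean, variance) of y at t+1 .. t+steps; uncertainty widens with k."""
    return [(level, var + k * level_var + obs_var) for k in range(1, steps + 1)]


series = [10.0, 11.0, None, 12.0, 11.5]     # made-up data with one gap
filtered, level, var = local_level_filter(series, obs_var=1.0, level_var=0.1)
for k, (mu, variance) in enumerate(forecast(level, var, 3, 1.0, 0.1), start=1):
    print(f"t+{k}: mean={mu:.2f}, std={variance ** 0.5:.2f}")
```

Because the level is an explicit, named part of the state, it can be read and plotted directly, which is where the interpretability of structural models comes from.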

Marketing Time Series Model Chosen... Just the Beginning

Choosing to work with a structural time series model was only the beginning of the journey to the best-fit solution for marketing teams in industries ranging from ecommerce to higher education to B2B to digital media.

Continue on this journey with us in part two in this series, where we share in more depth the strategy that led us to the structural time series model through a discussion on long-term trends in business.

“Since all models are wrong the scientist cannot obtain a "correct" one by excessive elaboration. On the contrary following William of Occam he should seek an economical description of natural phenomena. Just as the ability to devise simple but evocative models is the signature of the great scientist so over elaboration and over parameterization is often the mark of mediocrity.” -- George E.P. Box